How to effectively evaluate your RAG + LLM applications

Hey there! Ever wondered how some of today's applications seem almost magically smart? A big part of that magic comes from combining RAG with an LLM. Think of RAG (Retrieval-Augmented Generation) as the brainy bookworm of the AI world: it digs through tons of information to find exactly what's needed for your question. Then there's the LLM (Large Language Model), like the famous GPT series, which turns what was retrieved into a smooth answer using its impressive text generation capabilities. With these two together, you've got an AI that's not just smart but also super relevant and context-aware. It's like combining a super-fast research assistant with a witty conversationalist. This combo is fantastic for anything from helping you find specific information quickly to having a chat that feels surprisingly real.

But here's the catch: How do we know if our AI is actually being helpful and not just spouting fancy jargon? That's where evaluation comes in. It's crucial, not just a nice-to-have. We need to make sure our AI isn't just accurate but also relevant, useful, and not going off on weird tangents. After all, what's the use of a smart assistant if it can't understand what you need or gives you answers that are way off base?

Evaluating our RAG + LLM application is like a reality check. It tells us if we're really on track in creating an AI that's genuinely helpful and not just technically impressive. So, in this post, we're diving into how to do just that – ensuring our AI is as awesome in practice as it is in theory!

Development Phase

In the development phase, it's essential to think along the lines of a typical machine learning model evaluation pipeline. In a standard AI/ML setup, we usually work with several datasets, such as training, development, and testing sets, and employ quantitative metrics to gauge the model's effectiveness. However, evaluating Large Language Models (LLMs) presents unique challenges. Traditional quantitative metrics struggle to capture the quality of LLM output because these models generate language that's both varied and creative, so many differently worded answers can be equally correct. Consequently, it's difficult to assemble a comprehensive set of labels for effective evaluation.

In academic circles, researchers might employ benchmarks and scores like MMLU to rank LLMs, and human experts may be enlisted to evaluate the quality of LLM outputs. These methods, however, do not seamlessly transition to the production environment, where the pace of development is rapid, and practical applications require immediate results. It's not solely about LLM performance; the real-world demands take into account the entire process, which includes data retrieval, prompt composition, and the LLM's contribution. Crafting a human-curated benchmark for every new system iteration or when there are changes in documents or domains is impractical. Furthermore, the swift pace of development in the industry doesn't afford the luxury of lengthy waits for human testers to evaluate each update before deployment. Therefore, adjusting the evaluation strategies that work in academia to align with the swift and results-focused production environment presents a considerable challenge.

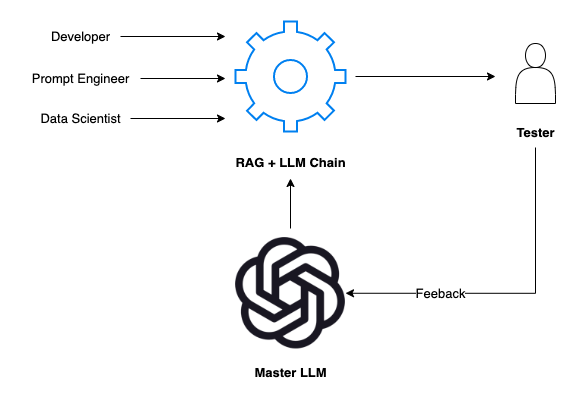

If this sounds like your situation, consider using a pseudo score provided by a master LLM, that is, a stronger model that grades your application's outputs. This score could reflect a combination of automated evaluation metrics and a distilled essence of human judgment. Such a hybrid approach aims to bridge the gap between the nuanced understanding of human evaluators and the scalable, systematic analysis of machine evaluation.

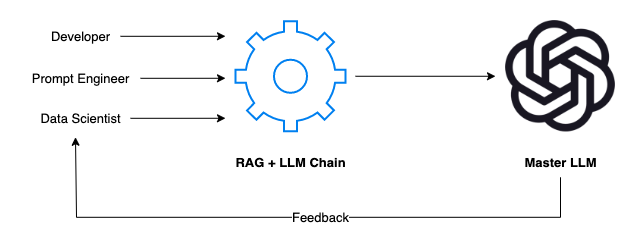

For example, if your team is developing an in-house LLM that's trained on your specific domain and data, the process will typically involve a collaborative effort from developers, prompt engineers, and data scientists. Each member plays a critical role:

- Developers are the architects. They construct the framework of the application, ensuring the RAG + LLM chain is seamlessly integrated and can navigate through different scenarios effortlessly.

- Prompt Engineers are the creatives. They devise scenarios and prompts that emulate real-world user interactions. They ponder the "what ifs" and push the system to deal with a broad spectrum of topics and questions.

- Data Scientists are the strategists. They analyze the responses, delve into the data, and wield their statistical expertise to assess whether the AI's performance meets the mark.

The feedback loop here is essential. As our AI responds to prompts, the team scrutinizes every output. Did the AI understand the question? Was the response accurate and relevant? Could the language be smoother? This feedback is then looped back into the system for improvements.

To take this up a notch, imagine using a master LLM like OpenAI's GPT-4 as a benchmark for evaluating your self-developed LLM. You aim to match or even surpass the performance of the GPT series, which is known for its robustness and versatility. Here’s how you might proceed:

- Generating a Relevant Dataset: Start by creating a dataset that reflects the nuances of your domain. This dataset could be curated by experts or, to save time, synthesized with the help of GPT-4. Either way, make sure it matches your gold standard.

- Defining Metrics for Success: Leverage the strengths of the master LLM to assist in defining your metrics. You have the liberty to choose metrics that best fit your goals, given that the master LLM can handle the more complex tasks. For community-standard options, take a look at the work in LangChain and in libraries such as Ragas, which offer metrics like faithfulness, context recall, context precision, and answer similarity.

- Automating Your Evaluation Pipeline: To keep pace with rapid development cycles, establish an automated pipeline. This will consistently assess the application's performance against your predefined metrics following each update or change. By automating the process, you ensure that your evaluation is not only thorough but also efficiently iterative, allowing for swift optimization and refinement.
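The three steps above can be sketched as a small evaluation harness. This is only a minimal illustration, not a specific library's API: `evaluate`, `passes_gate`, the `tolerance` value, and the toy app and metric are all hypothetical names chosen for this example. In a real pipeline the scorer would typically call the master LLM instead of doing an exact match.

```python
from statistics import mean
from typing import Callable, Dict, List

# Hypothetical types: an "app" maps a question to an answer, and a
# "scorer" maps (question, answer, ground_truth) to a score in [0, 1].
App = Callable[[str], str]
Scorer = Callable[[str, str, str], float]


def evaluate(app: App, dataset: List[Dict[str, str]],
             scorers: Dict[str, Scorer]) -> Dict[str, float]:
    """Run the app on every example and average each metric."""
    per_metric: Dict[str, List[float]] = {name: [] for name in scorers}
    for example in dataset:
        answer = app(example["question"])
        for name, scorer in scorers.items():
            score = scorer(example["question"], answer, example["ground_truth"])
            per_metric[name].append(score)
    return {name: mean(values) for name, values in per_metric.items()}


def passes_gate(current: Dict[str, float], baseline: Dict[str, float],
                tolerance: float = 0.02) -> bool:
    """True if no metric dropped more than `tolerance` below the baseline."""
    return all(current[name] >= baseline[name] - tolerance for name in baseline)


# Toy stand-ins so the sketch runs without any model or API key.
def toy_app(question: str) -> str:
    return "Paris" if "France" in question else "unknown"


def exact_match(question: str, answer: str, ground_truth: str) -> float:
    return 1.0 if answer.strip().lower() == ground_truth.strip().lower() else 0.0


dataset = [
    {"question": "What is the capital of France?", "ground_truth": "Paris"},
    {"question": "What is the capital of Japan?", "ground_truth": "Tokyo"},
]
report = evaluate(toy_app, dataset, {"exact_match": exact_match})
print(report)
print(passes_gate(report, baseline={"exact_match": 0.5}))
```

Running something like this after every change (for example in CI) turns the metrics into a regression gate: an update ships only if it does not fall noticeably below the stored baseline.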

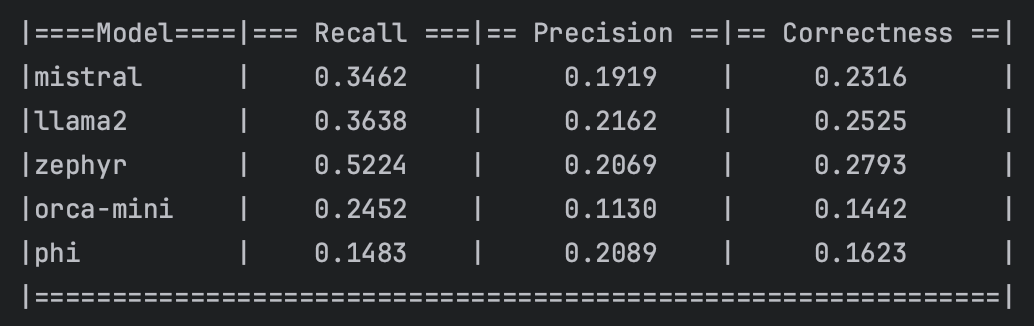

For instance, in the following demonstration, I will show you how to automatically evaluate various open-source LLMs on a simple document retrieval conversation task using OpenAI's GPT-4.

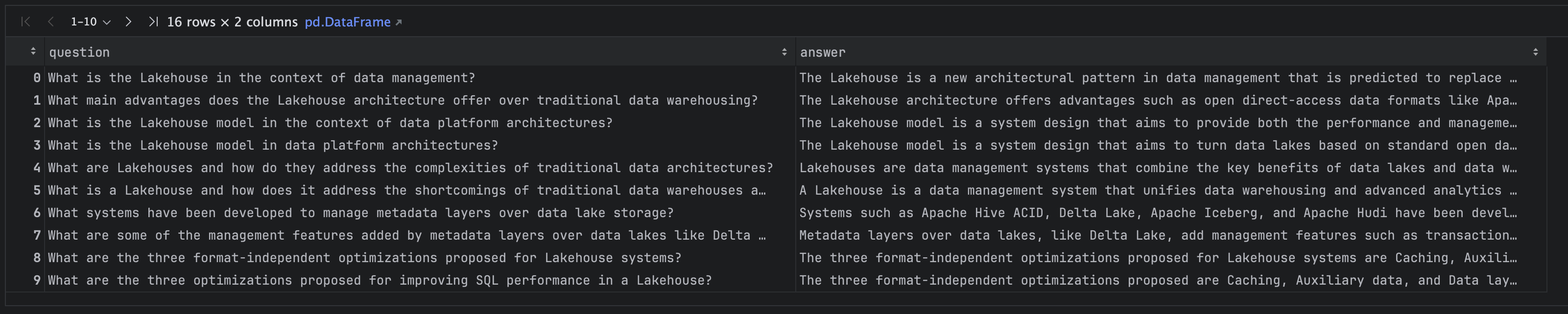

First, we utilize OpenAI GPT-4 to create a synthesized dataset derived from a document as shown below:
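The original listing is not included here, so the following is only a rough sketch of the idea rather than the exact script. It assumes a hypothetical `ask_llm` callable that wraps the GPT-4 call and returns the model's reply as text; the prompt wording, the function names, and the `question`/`answer` column names are likewise assumptions made for illustration.

```python
import csv
import io
import json
from typing import Callable, Dict, List

# Hypothetical prompt: asks for question/answer pairs as a JSON list.
PROMPT_TEMPLATE = (
    "Read the document below and write {n} question/answer pairs that can be "
    "answered from it alone. Reply with a JSON list of objects with the keys "
    '"question" and "answer".\n\nDocument:\n{document}'
)


def generate_qa_pairs(document: str, ask_llm: Callable[[str], str],
                      n: int = 5) -> List[Dict[str, str]]:
    """Ask the master LLM for QA pairs and keep only well-formed ones."""
    raw = ask_llm(PROMPT_TEMPLATE.format(n=n, document=document))
    cleaned = []
    for pair in json.loads(raw):
        if not isinstance(pair, dict):
            continue
        question, answer = pair.get("question"), pair.get("answer")
        if (isinstance(question, str) and isinstance(answer, str)
                and question.strip() and answer.strip()):
            cleaned.append({"question": question.strip(), "answer": answer.strip()})
    return cleaned


def write_csv(pairs: List[Dict[str, str]], handle) -> None:
    """Write the pairs as CSV with a header row."""
    writer = csv.DictWriter(handle, fieldnames=["question", "answer"])
    writer.writeheader()
    writer.writerows(pairs)


# Stub standing in for GPT-4 so the sketch runs offline.
def fake_llm(prompt: str) -> str:
    return json.dumps([
        {"question": "What does RAG stand for?",
         "answer": "Retrieval-Augmented Generation"},
        {"question": "", "answer": "dropped because the question is empty"},
    ])


pairs = generate_qa_pairs("RAG stands for Retrieval-Augmented Generation.", fake_llm)
buffer = io.StringIO()  # swap for open("qa_dataset.csv", "w", newline="") to save a file
write_csv(pairs, buffer)
print(buffer.getvalue())
```

Validating the reply before writing it matters because the model may return malformed or empty entries, and those would otherwise end up as bad ground truth.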

After running the code mentioned above, we obtain a CSV file as the result. This file contains pairs of questions and answers pertaining to the document we input, as follows:

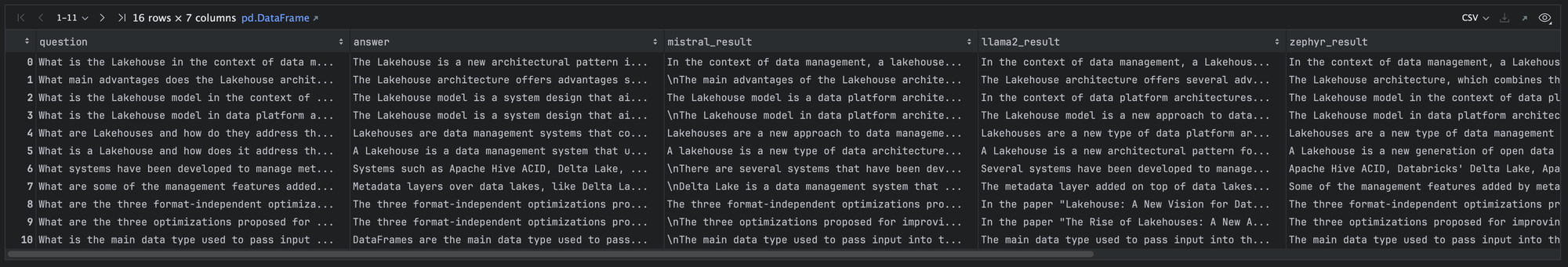

Then, we construct simple DocumentRetrievalQA chains using LangChain and swap in several open-source LLMs that run locally via Ollama. You can find my previous tutorial on that here.

In summary, the code above establishes a simple document retrieval chain. We execute this chain using several models, such as Mistral, Llama2, Zephyr, Orca-mini, and Phi. As a result, we add five columns to our existing DataFrame, one per model, to store each LLM's predictions.
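To make the "one column per model" step concrete, here is a simplified stand-in that uses plain dictionaries instead of a DataFrame and stub functions instead of real chains. The lowercase model names, the `<model>_result` column naming, and `make_stub_chain` are assumptions for this sketch. In practice each chain would be a retrieval QA chain backed by the corresponding local model.

```python
from typing import Callable, Dict, List

# Assumed model identifiers, mirroring the models named in the text.
MODEL_NAMES = ["mistral", "llama2", "zephyr", "orca-mini", "phi"]

Chain = Callable[[str], str]


def make_stub_chain(model_name: str) -> Chain:
    """Stand-in for a real retrieval chain; returns a canned answer."""
    def chain(question: str) -> str:
        return f"[{model_name}] answer to: {question}"
    return chain


def add_predictions(rows: List[Dict[str, str]],
                    chains: Dict[str, Chain]) -> List[Dict[str, str]]:
    """Return copies of the rows with one extra '<model>_result' column per chain."""
    enriched = []
    for row in rows:
        new_row = dict(row)
        for name, chain in chains.items():
            new_row[f"{name}_result"] = chain(row["question"])
        enriched.append(new_row)
    return enriched


rows = [{"question": "What does RAG stand for?",
         "answer": "Retrieval-Augmented Generation"}]
chains = {name: make_stub_chain(name) for name in MODEL_NAMES}
results = add_predictions(rows, chains)
print(sorted(results[0].keys()))
```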

Now, let's define a master chain using OpenAI's GPT-4 to evaluate the prediction results. In this setup, we will calculate a correctness score that approximates the F1 score commonly used in traditional AI/ML problems. To accomplish this, we'll apply the analogous concepts of True Positives (TP), False Positives (FP), and False Negatives (FN), defined at the level of individual statements as follows:

- TP: Statements that are present in both the answer and the ground truth.

- FP: Statements present in the answer but not found in the ground truth.

- FN: Relevant statements found in the ground truth but omitted in the answer.

With these definitions, we can compute precision, recall, and the F1 score using the formulas below:

$$\text{Precision}=\frac{TP}{TP + FP}$$

$$\text{Recall}=\frac{TP}{TP + FN}$$

$$\text{F1}=2\times\frac{\text{Precision}\times\text{Recall}}{\text{Precision}+\text{Recall}}$$
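As a small worked example of these formulas, the sketch below assumes the master LLM returns its verdict as JSON with three lists of statements under the keys `TP`, `FP`, and `FN`. That output format and the function names are assumptions for illustration. The zero-denominator handling is also a choice made here, since the formulas are undefined when no statements are found.

```python
import json
from typing import Tuple


def correctness_scores(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Return (precision, recall, f1), using 0.0 when a denominator is zero."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denominator = precision + recall
    f1 = 2 * precision * recall / denominator if denominator else 0.0
    return precision, recall, f1


def score_judge_output(raw_json: str) -> Tuple[float, float, float]:
    """Count the statements in each bucket of the judge's verdict and score them."""
    verdict = json.loads(raw_json)
    tp, fp, fn = (len(verdict.get(key, [])) for key in ("TP", "FP", "FN"))
    return correctness_scores(tp, fp, fn)


# Hypothetical verdict: 3 matching statements, 1 unsupported, 2 missing.
example_verdict = json.dumps({
    "TP": ["RAG retrieves documents", "The LLM writes the answer", "Both are combined"],
    "FP": ["RAG requires fine-tuning"],
    "FN": ["Retrieval uses embeddings", "Context is added to the prompt"],
})
precision, recall, f1 = score_judge_output(example_verdict)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
# prints: precision=0.75 recall=0.60 f1=0.67
```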

Okay, now we've got a simple benchmark for several models. This can be considered as a preliminary indicator of how each model handles the document retrieval task. While these figures offer a snapshot, they're just the start of the story. They serve as a baseline for understanding which models are better at retrieving accurate and relevant information from a given corpus. You can find the source code here.

Human-in-the-Loop Feedback

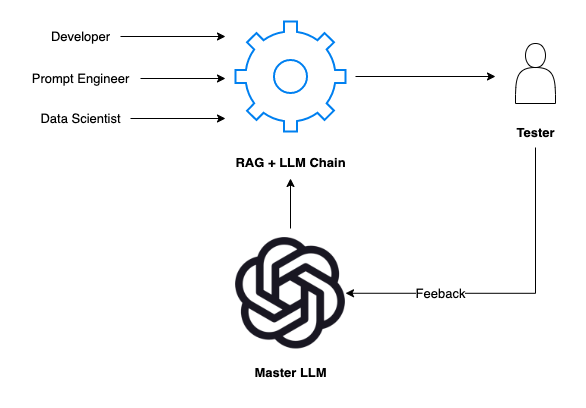

When it comes to tuning our AI through human-in-the-loop feedback, the synergy between human testers and the Master LLM is pivotal. This relationship is not merely about gathering feedback but about creating a responsive AI system that adapts and learns from human input.

The Interactive Process

- The Tester's Input: Testers engage with the RAG + LLM Chain, assessing its outputs from a human perspective. They provide feedback on aspects like the relevance, accuracy, and naturalness of the AI's responses.

- Feedback to the Master LLM: This is where the magic happens. The feedback from human testers is passed directly to the Master LLM, which is set up to interpret that feedback and use it to refine its subsequent outputs.

- Prompt Tuning by Master LLM: Armed with this feedback, the Master LLM adjusts the prompt for our developmental LLM. This process is analogous to a mentor instructing a student. The Master LLM carefully modifies how the developmental LLM interprets and reacts to prompts, ensuring a more effective and contextually aware response mechanism.
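One way to picture this loop in code is shown below. It is a sketch under stated assumptions, not ClearML's or any other tool's implementation: `Feedback`, `build_tuning_request`, `tune_prompt`, and the meta-prompt wording are hypothetical, and `master_llm` is any callable that sends text to the Master LLM and returns its reply.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Feedback:
    """One tester observation about a single response."""
    question: str
    response: str
    comment: str


# Hypothetical meta-prompt sent to the Master LLM.
META_PROMPT = (
    "You are improving the system prompt of a question-answering assistant.\n"
    "Current prompt:\n{prompt}\n\n"
    "Tester feedback:\n{feedback}\n\n"
    "Rewrite the prompt to address the feedback. Return only the new prompt."
)


def build_tuning_request(current_prompt: str, feedback: List[Feedback]) -> str:
    """Fold the current prompt and all tester comments into one request."""
    lines = [
        f"- Q: {item.question}\n  A: {item.response}\n  Comment: {item.comment}"
        for item in feedback
    ]
    return META_PROMPT.format(prompt=current_prompt, feedback="\n".join(lines))


def tune_prompt(current_prompt: str, feedback: List[Feedback],
                master_llm: Callable[[str], str]) -> str:
    """Ask the Master LLM for a revised prompt; keep the old one if nothing changes."""
    if not feedback:
        return current_prompt
    revised = master_llm(build_tuning_request(current_prompt, feedback)).strip()
    return revised or current_prompt


# Stub Master LLM so the sketch runs offline.
def stub_master_llm(request: str) -> str:
    return "Answer using only the retrieved context, and cite the source passage."


feedback = [Feedback("What is our refund window?", "Probably 30 days.",
                     "Answer was a guess; it should quote the policy document.")]
new_prompt = tune_prompt("Answer the user's question.", feedback, stub_master_llm)
print(new_prompt)
```

The revised prompt would then be fed back into the RAG + LLM chain and re-evaluated, so each round of tester feedback is checked against the same metrics as before.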

The Dual Role of the Master LLM

The Master LLM functions both as a benchmark for the in-house developed LLM and as an active participant in the feedback loop. It assesses the feedback, tunes the prompts or the model parameters, and essentially 'learns' from human interactions.

The Benefit of Real-Time Adaptation

This process is transformative. It allows the AI to adapt in real time, making it more agile and better aligned with the complexities of human language and thought processes. Such real-time adaptation keeps the AI improving continuously rather than only at scheduled retraining points.

The Cycle of Improvement

Through this cycle of interaction, feedback, and adaptation, our AI becomes more than just a tool; it becomes a learning entity, capable of improving through each interaction with a human tester. This human-in-the-loop model ensures that our AI doesn't stagnate but evolves to become a more efficient and intuitive assistant.

In summary, human-in-the-loop feedback isn't just about collecting human insights; it's about creating a dynamic and adaptable AI that can fine-tune its behavior to serve users better. This iterative process ensures that our RAG + LLM applications remain at the cutting edge, delivering not just answers but contextually aware, nuanced responses that reflect a genuine understanding of the user's needs.

For a simple demo, you can watch how ClearML uses this concept to enhance Promptimizer in the video below:

Operation Phase

Transitioning into the Operation Phase is like moving from dress rehearsals to opening night. Here, our RAG + LLM applications are no longer hypothetical entities; they become active participants in the daily workflows of real users. This phase is the litmus test for all the preparation and fine-tuning done in the development phase.

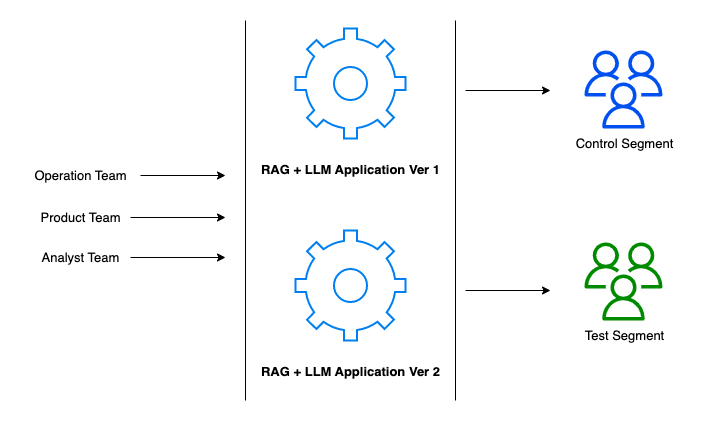

In this phase, our teams – operations, product, and analysts – align to deploy and manage the applications, ensuring that everything we've built is not only functioning but also thriving in a live environment. It's here that we can consider implementing A/B testing strategies to measure the effectiveness of our applications in a controlled manner.

- A/B Testing Framework: We split our user base into two segments: the control segment, which continues to use the established version of the application (Version 1), and the test segment, which tries out the new features in Version 2. (You can also run multiple A/B tests at the same time.) This allows us to gather comparative data on user experience, feature receptivity, and overall performance.

- Operational Rollout: The operations team is tasked with the smooth rollout of both versions, ensuring that the infrastructure is robust and that any version transitions are seamless for the user.

- Product Evolution: The product team, with its finger on the pulse of user feedback, works to iterate the product. This team ensures that the new features align with user needs and the overall product vision.

- Analytical Insights: The analyst team rigorously examines the data collected from the A/B test. Their insights are critical in determining whether the new version outperforms the old and if it's ready for a wider release.

- Performance Metrics: Key performance indicators (KPIs) are monitored to measure the success of each version. These include user engagement metrics, satisfaction scores, and the accuracy of the application's outputs.
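The split and the comparison described above can be sketched with the standard library alone. The hash-based assignment, the experiment name, and the use of a two-proportion z-test on a binary satisfaction signal (for example, thumbs up or down) are illustrative choices rather than a prescribed method, and the counts in the example are made up.

```python
import hashlib
import math
from typing import Tuple


def assign_variant(user_id: str, experiment: str = "rag-v2",
                   test_share: float = 0.5) -> str:
    """Deterministically map a user to 'control' or 'test' via a stable hash."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # value in [0, 1)
    return "test" if bucket < test_share else "control"


def two_proportion_z_test(success_a: int, total_a: int,
                          success_b: int, total_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in success rates, B minus A."""
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_error == 0:
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_error
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Made-up counts: satisfied sessions out of total sessions per segment.
z, p_value = two_proportion_z_test(success_a=400, total_a=1000,   # Version 1
                                   success_b=460, total_b=1000)   # Version 2
print(assign_variant("user-123"))
print(f"z={z:.2f}, p={p_value:.4f}")
```

Hashing the user ID together with the experiment name keeps each user in the same segment across sessions, and it lets several experiments run at once without their splits lining up.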

The operation phase is dynamic, informed by continuous feedback loops that not only improve the applications but also enhance user engagement and satisfaction. It's a phase characterized by monitoring, analysis, iteration, and above all, learning from live data.

As we navigate this phase, our goal is to not only maintain the high standards set by the development phase but to exceed them, ensuring that our RAG + LLM application remains at the forefront of innovation and usability.

Conclusion

In summary, the integration of Retrieval-Augmented Generation (RAG) and Large Language Models (LLMs) marks a significant advancement in AI, blending deep data retrieval with sophisticated text generation. To benefit from it, however, we need an effective evaluation method and an iterative development strategy. The development phase emphasizes customizing AI evaluation and enhancing it with human-in-the-loop feedback, ensuring that these systems are responsive and adaptable to real-world scenarios. This approach highlights the evolution of AI from a mere tool to a collaborative partner. The operation phase then tests these applications with real users, using strategies like A/B testing and continuous feedback loops to ensure effectiveness and ongoing evolution based on user interaction.