How I Built an Entire Engineering Team with Claude Code

How I built skills that turned Claude Code from a forgetful intern into a senior engineering team.

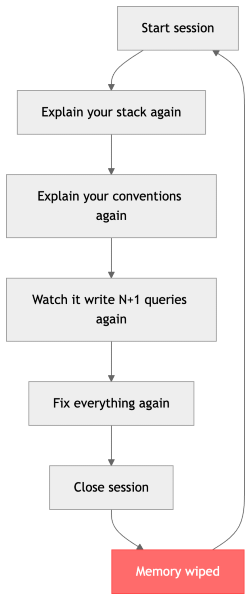

Last month, I fixed the same N+1 query three times. Three different sessions. Same project. Same table. Same bug.

Each time, I opened Claude Code, asked it to add a feature, and watched it write a query inside a loop. Each time, I corrected it. Each time, it said "good catch!" and fixed it. And each time, the next session started fresh — no memory, no lessons learned, same mistake again.

The third time, I didn't fix the query. I closed my laptop, made coffee, and thought about what was actually broken.

It wasn't Claude. Claude is smart. The problem was that nobody taught it how I build software.

The Real Problem with AI Coding Tools

Here's what nobody talks about when they demo AI coding assistants: the output looks great in a 2-minute video, but it doesn't survive a real codebase.

I've been shipping production code for years. I know what good code looks like. And I kept seeing the same patterns from AI tools:

It forgets everything. Every session starts from zero. The conventions I explained yesterday? Gone. The architecture decisions we made last week? Never happened. It's like working with a brilliant colleague who gets amnesia every morning.

It writes code that works but doesn't scale. The classic: SELECT * FROM users without a LIMIT. A loop that fires a query per iteration. An O(n²) algorithm where a hash map gives you O(n). It's functional — and it's a ticking time bomb.
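To make the N+1 pattern concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `users` and `orders` tables, their columns, and the function names are made up for illustration; they are not taken from the project described above. The first function runs one query per user inside a loop, and the second gets the same totals from a single JOIN.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a tiny in-memory database: 3 users with 2 orders each (sample data)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE orders (
            id INTEGER PRIMARY KEY,
            user_id INTEGER NOT NULL,
            total INTEGER NOT NULL
        );
        """
    )
    conn.executemany(
        "INSERT INTO users (id, name) VALUES (?, ?)",
        [(1, "ana"), (2, "bo"), (3, "cy")],
    )
    conn.executemany(
        "INSERT INTO orders (user_id, total) VALUES (?, ?)",
        [(1, 10), (1, 20), (2, 5), (2, 5), (3, 7), (3, 1)],
    )
    return conn


def totals_n_plus_one(conn: sqlite3.Connection):
    """Anti-pattern: one query for the users, then one more query per user."""
    queries = 1
    users = conn.execute("SELECT id, name FROM users ORDER BY id").fetchall()
    result = {}
    for user_id, name in users:
        row = conn.execute(
            "SELECT COALESCE(SUM(total), 0) FROM orders WHERE user_id = ?",
            (user_id,),
        ).fetchone()
        queries += 1
        result[name] = row[0]
    return result, queries


def totals_batched(conn: sqlite3.Connection):
    """Fix: a single JOIN + GROUP BY returns every total in one round trip."""
    rows = conn.execute(
        """
        SELECT u.name, COALESCE(SUM(o.total), 0)
        FROM users AS u
        LEFT JOIN orders AS o ON o.user_id = u.id
        GROUP BY u.id, u.name
        ORDER BY u.id
        """
    ).fetchall()
    return dict(rows), 1
```

With the three sample users, the loop version issues four queries and the batched version issues one. The gap grows linearly with the number of rows, which is why the pattern looks harmless in a demo and hurts in production.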

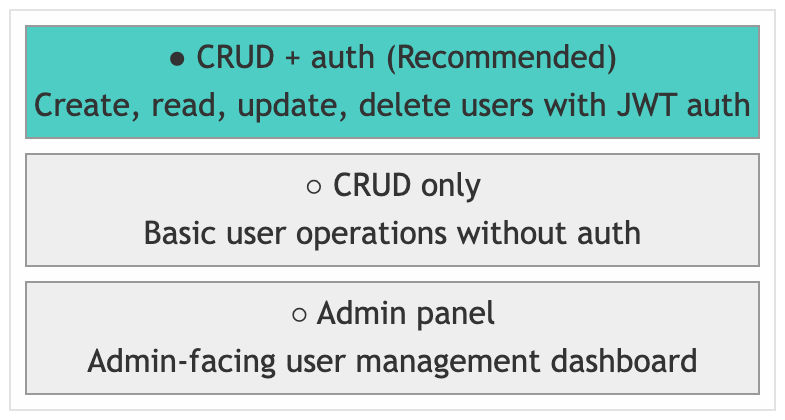

It rarely asks, often guesses. I type "add user management" and it builds an entire auth system with email verification, password reset, and admin panels. I wanted CRUD endpoints. But instead of asking, it assumed — and now I'm deleting 400 lines of code I never wanted.

Every session is different. Monday's session uses one response format. Tuesday's uses another. Wednesday it puts everything in one file. There's no consistency, no conventions, no standards. It's like hiring a different contractor every day.

Sound familiar?

The Moment It Clicked

After several incidents like this, I realized something: I was blaming the tool for a gap I had created. It had never been given instructions.

Claude Code has this feature called skills — reusable instruction sets that teach it how to approach tasks. Think of them as SOPs for your AI pair programmer. They persist across sessions, they encode your standards, and they activate automatically based on what you're doing.

What if I stopped re-explaining and started encoding? What if every convention, every pattern, every "don't do this" lived in a skill that Claude reads before it writes a single line?

So I built one skill. Then five. Then I couldn't stop.

An Engineering Department in Your CLI

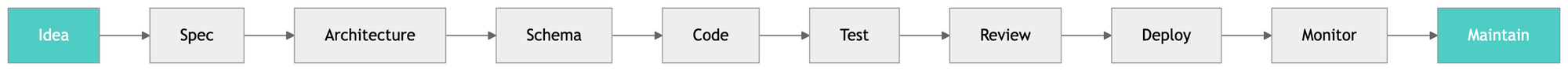

What started as "stop writing N+1 queries" turned into a full engineering pipeline — 36 skills that cover everything from product spec to production deploy.

Every stage has a skill. Every skill encodes senior engineering knowledge. Nothing is left to chance.

Here's how it works in practice.

Before: "Add user management"

Without skills, Claude would:

- Guess what "user management" means

- Pick random patterns for validation, pagination, error handling

- Write everything in one or two files

- Skip tests

- Leave N+1 queries everywhere

- Produce code that I'd spend hours fixing

After: "Add user management"

With the skills suite, here's what actually happens:

Step 1: The eng-lead skill activates (it's always on). Instead of guessing, it asks me a clarifying question and offers a short list of options.

I pick an option. No typing paragraphs of requirements. No back-and-forth. Just click.

Step 2: It routes to the right skills. Based on my answer, it chains: api-design → db-migrate → go-feature → review-code. Each skill knows exactly what to do.

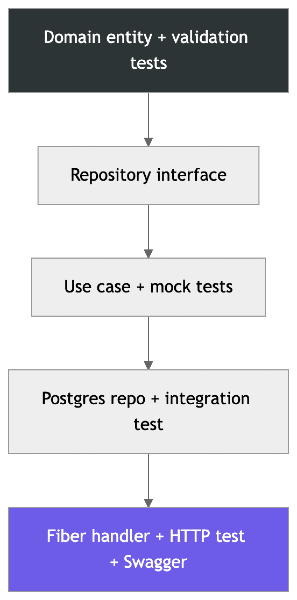

Step 3: The go-feature skill builds the feature layer by layer, test-driven and inside-out.
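One way to read "inside-out" is: start with the domain rule and its test, then add the layer that depends on it. The sketch below shows that shape in Python rather than Go, purely for brevity. `User`, `UserRepository`, `InMemoryUserRepository`, and `register_user` are hypothetical names and the email normalization is an assumption; none of it is output from the go-feature skill.

```python
from dataclasses import dataclass
from typing import Protocol


# Layer 1 (innermost): domain. Pure rules, no I/O, no imports from outer layers.
@dataclass(frozen=True)
class User:
    org_id: int
    email: str

    def __post_init__(self) -> None:
        if "@" not in self.email:
            raise ValueError(f"invalid email: {self.email!r}")


# Layer 2: the port the service depends on. Storage details live elsewhere.
class UserRepository(Protocol):
    def exists(self, org_id: int, email: str) -> bool: ...
    def add(self, user: User) -> None: ...


# Layer 3: application service, written only against the port above.
def register_user(repo: UserRepository, org_id: int, email: str) -> User:
    user = User(org_id=org_id, email=email.strip().lower())
    if repo.exists(user.org_id, user.email):
        raise ValueError("email already registered in this organization")
    repo.add(user)
    return user


# Test double: lets the service be tested before any real database exists.
class InMemoryUserRepository:
    def __init__(self) -> None:
        self._keys: set[tuple[int, str]] = set()

    def exists(self, org_id: int, email: str) -> bool:
        return (org_id, email) in self._keys

    def add(self, user: User) -> None:
        self._keys.add((user.org_id, user.email))


# In a test-first flow, each test below is written (and fails) before its layer.
def test_domain_rejects_invalid_email() -> None:
    try:
        User(org_id=1, email="not-an-email")
    except ValueError:
        return
    raise AssertionError("expected ValueError")


def test_service_rejects_duplicate_within_org() -> None:
    repo = InMemoryUserRepository()
    register_user(repo, 1, "Ana@Example.com")
    register_user(repo, 2, "ana@example.com")  # same email, other org: allowed
    try:
        register_user(repo, 1, "ana@example.com")
    except ValueError:
        return
    raise AssertionError("expected ValueError")
```

The dependency arrows only point inward: the service knows about the domain and the port, and nothing in the domain knows about storage.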

The Four Non-Negotiables

Every skill in the suite enforces four standards automatically. I never have to ask for them:

- Performance First
  - O(n) algorithms
  - Batch operations, no N+1 queries
  - Pre-allocated collections
- Clean Architecture
  - Domain isolation
  - Clear dependency rules
  - Layers that don't leak
- Secure by Design
  - Parameterized queries
  - Input validation
  - Auth middleware, no hardcoded secrets
- Test-Driven Always
  - Failing test written first
  - Every layer, every time

These are NOT optional. NOT "nice to have." They're enforced at every stage by every skill.

The agent never asks "should I add tests?" or "should I use parameterized queries?" Those questions are answered permanently. It focuses its questions on things that actually matter — product decisions, scope, trade-offs.
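As an illustration of the "Secure by Design" items, here is a small sketch that combines input validation with a parameterized query, using Python's built-in `sqlite3` module. The `users` table, the `find_user_id` function, and the deliberately loose email pattern are assumptions for the example, not code from the skills suite.

```python
import re
import sqlite3
from typing import Optional

# Deliberately simple shape check (an assumption, not a full email validator).
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def find_user_id(conn: sqlite3.Connection, email: str) -> Optional[int]:
    """Return the id of the user with this email, or None if there is no match."""
    # Input validation: reject values that are not shaped like an email.
    if not EMAIL_RE.match(email):
        raise ValueError("invalid email")
    # Parameterized query: the driver binds `email` as data, never as SQL text.
    row = conn.execute(
        "SELECT id FROM users WHERE email = ?", (email,)
    ).fetchone()
    return row[0] if row else None
```

The two checks cover different failures. A payload such as `x@y.z'OR'1'='1` passes the loose shape check, but because it is bound as a parameter it is compared as a literal string and matches nothing.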

The Skill That Changed Everything: claude-md

Remember my N+1 query problem? Three sessions, same mistake?

The claude-md skill fixes this permanently. Every skill in the suite is wired to update the project's CLAUDE.md file after making changes. But the killer feature is the Gotchas section:

    ## Gotchas

    - The users table has a unique constraint on (org_id, email), not just email
    - Don't use time.Now() in domain — inject a Clock interface
    - Redis session keys expire after 24h — don't cache user objects longer
    - The /api/v1/events endpoint is paginated — always pass limit/offset
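To show what one of these gotchas looks like in code, here is a sketch of the clock-injection idea. The gotcha itself is about Go's `time.Now()`; this version uses Python, where the equivalent hidden dependency is `datetime.now()`. `Clock`, `SystemClock`, `FixedClock`, `Session`, and `is_expired` are hypothetical names, and the 24-hour constant simply mirrors the session expiry mentioned in the list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Protocol

SESSION_TTL = timedelta(hours=24)  # mirrors the 24h expiry from the gotchas list


class Clock(Protocol):
    """The only thing the domain knows about time."""

    def now(self) -> datetime: ...


class SystemClock:
    """Production clock: reads the real wall-clock time in UTC."""

    def now(self) -> datetime:
        return datetime.now(timezone.utc)


@dataclass(frozen=True)
class FixedClock:
    """Test clock: always returns the instant it was created with."""

    instant: datetime

    def now(self) -> datetime:
        return self.instant


@dataclass(frozen=True)
class Session:
    user_id: int
    created_at: datetime


def is_expired(session: Session, clock: Clock) -> bool:
    """Domain rule: a session expires once it is at least SESSION_TTL old."""
    return clock.now() - session.created_at >= SESSION_TTL
```

Because the domain function receives its clock, a test can pin time to any instant instead of sleeping or patching the system clock.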

When I correct the agent, it records the correction. When debugging reveals a project-specific pitfall, it gets documented. The next session reads these gotchas before writing any code.

The agent never makes the same mistake twice.

This is the difference between a tool that forgets and a tool that learns. CLAUDE.md is the project's permanent memory — every session inherits every lesson from every previous session.
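In the suite, the agent itself edits CLAUDE.md by following the skill's instructions. Purely to illustrate the bookkeeping involved, here is a rough Python sketch of "record a correction once" as a function. `add_gotcha` is a hypothetical helper, not part of the claude-md skill, and it assumes the section heading is exactly `## Gotchas`.

```python
def add_gotcha(claude_md: str, gotcha: str) -> str:
    """Return the CLAUDE.md text with `gotcha` listed under '## Gotchas'.

    The call is idempotent: a gotcha that is already recorded is not duplicated.
    """
    bullet = f"- {gotcha.strip()}"
    lines = claude_md.splitlines()
    if bullet in lines:
        return claude_md  # already recorded, nothing to do

    if "## Gotchas" not in lines:
        # No section yet: append one at the end of the file.
        if lines and lines[-1].strip():
            lines.append("")
        lines += ["## Gotchas", "", bullet]
        return "\n".join(lines) + "\n"

    start = lines.index("## Gotchas")
    # The section ends at the next level-1 or level-2 heading, or at end of file.
    end = len(lines)
    for i in range(start + 1, len(lines)):
        if lines[i].startswith("# ") or lines[i].startswith("## "):
            end = i
            break
    # Step back over trailing blank lines so the bullet joins the existing list.
    insert_at = end
    while insert_at > start + 1 and not lines[insert_at - 1].strip():
        insert_at -= 1
    lines.insert(insert_at, bullet)
    return "\n".join(lines) + "\n"
```

Calling it twice with the same correction leaves the file unchanged the second time, which is the property that keeps a long-lived memory file from filling up with repeats.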

How Skills Connect

The eng-lead skill is always active. It listens to every message, asks clarifying questions via interactive-clarify, and routes to the right skills based on your intent.

Planning a product? It chains product-spec → system-design → data-model → adr. You go from a vague idea to a fully documented architecture with database schema and decision records.

Starting a new project? It runs go-scaffold (or py-scaffold) + react-scaffold, then automatically sets up docker-build → ci-pipeline → onboarding to generate your Dockerfiles, CI config, and project docs.

Building a feature? It chains api-design → db-migrate → go-feature + react-feature in parallel, with integration-test skills running alongside. Every feature skill enforces code-quality, security, and TDD automatically.

Ready to commit? The review-code skill launches four agents in parallel — performance, architecture, security, and code quality — and auto-fixes anything critical before you push.

Something breaks? It routes to debug for hands-on investigation (Docker inspect, curl, psql, browser debugging), escalating to incident-response if it's production.

Maintenance? fullstack-healthcheck runs diagnostics across your entire stack and routes to dep-update, go-refactor, py-refactor, or react-refactor based on what it finds.

And through all of this, claude-md quietly keeps your project's CLAUDE.md in sync — so the next session starts exactly where this one left off.
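The routing described in this section can be pictured as a lookup from intent to an ordered list of stages. The sketch below is only a mental model: the real routing happens through Markdown instructions that the agent reads, not through code like this. The skill names come from the text above, while the intent keys (`"plan"`, `"scaffold"`, and so on), the `plan_for` and `describe` functions, and the choice to run claude-md as a final stage are assumptions.

```python
# Each intent maps to ordered stages; skills within one stage may run in parallel.
PIPELINES: dict[str, list[list[str]]] = {
    "plan": [["product-spec"], ["system-design"], ["data-model"], ["adr"]],
    "scaffold": [
        ["go-scaffold", "react-scaffold"],
        ["docker-build"],
        ["ci-pipeline"],
        ["onboarding"],
    ],
    "feature": [["api-design"], ["db-migrate"], ["go-feature", "react-feature"]],
    "commit": [["review-code"]],
    "debug": [["debug"]],
    "maintain": [["fullstack-healthcheck"]],
}


def plan_for(intent: str, production: bool = False) -> list[list[str]]:
    """Return the stages for an intent, copying so callers cannot mutate PIPELINES."""
    stages = [list(stage) for stage in PIPELINES[intent]]
    if intent == "debug" and production:
        # Production problems escalate from debug to incident-response.
        stages.append(["incident-response"])
    # Assumption: claude-md runs last so CLAUDE.md reflects the finished work.
    stages.append(["claude-md"])
    return stages


def describe(stages: list[list[str]]) -> str:
    """Render stages in the arrow notation used in this article."""
    return " → ".join(" + ".join(stage) for stage in stages)
```

For example, `describe(plan_for("feature"))` renders the feature chain in the same notation as the paragraphs above, with the claude-md stage appended at the end.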

Real Talk: What This Actually Feels Like

Before the skills suite, working with Claude Code felt like pair programming with someone brilliant but unreliable. You'd explain something, they'd nail it, and then tomorrow they'd have no idea what you talked about.

Now it feels like working with a senior engineer who:

- Asks smart questions instead of guessing

- Follows your conventions without being reminded

- Writes tests first because that's just how things are done

- Reviews their own code before showing you

- Remembers every mistake and never repeats them

- Knows when to check in and when to just execute

I still make all the product decisions. I still own the architecture. But the execution? That's handled.

The Numbers

Since adopting the skills suite:

| Before | After |
|---|---|
| Re-explain conventions every session | Encoded once, applied forever |
| N+1 queries in every PR | Caught and fixed automatically |
| No tests unless I ask | Tests first, every layer |
| Security added as afterthought | Secure by design from scaffold |
| Different patterns every session | Consistent architecture always |
| Agent guesses, builds wrong thing | Agent asks, builds right thing |
| Same mistake across sessions | Mistakes recorded, never repeated |

Try It Yourself

The entire suite is open source:

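    # Clone the suite, then symlink each skill into the user-level skills directory
    git clone https://github.com/vndee/engineering-skills.git
    cd engineering-skills
    mkdir -p ~/.claude/skills
    for skill in .claude/skills/*/; do
      name=$(basename "$skill")
      # -n stops a re-run from creating a nested link inside an existing one
      ln -sfn "$(pwd)/${skill%/}" "$HOME/.claude/skills/$name"
    done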

It's built for my stack (Go/Fiber, Python/FastAPI, React/Vite), but every skill is a standalone Markdown file you can fork and adapt. Using Django? Edit py-scaffold. Using Vue? Edit react-scaffold. The patterns transfer — clean architecture, TDD, performance standards — those are universal.

The skills suite is open source at github.com/vndee/engineering-skills. PRs welcome — especially if you adapt skills for different stacks.

Built with Claude Code, obviously.