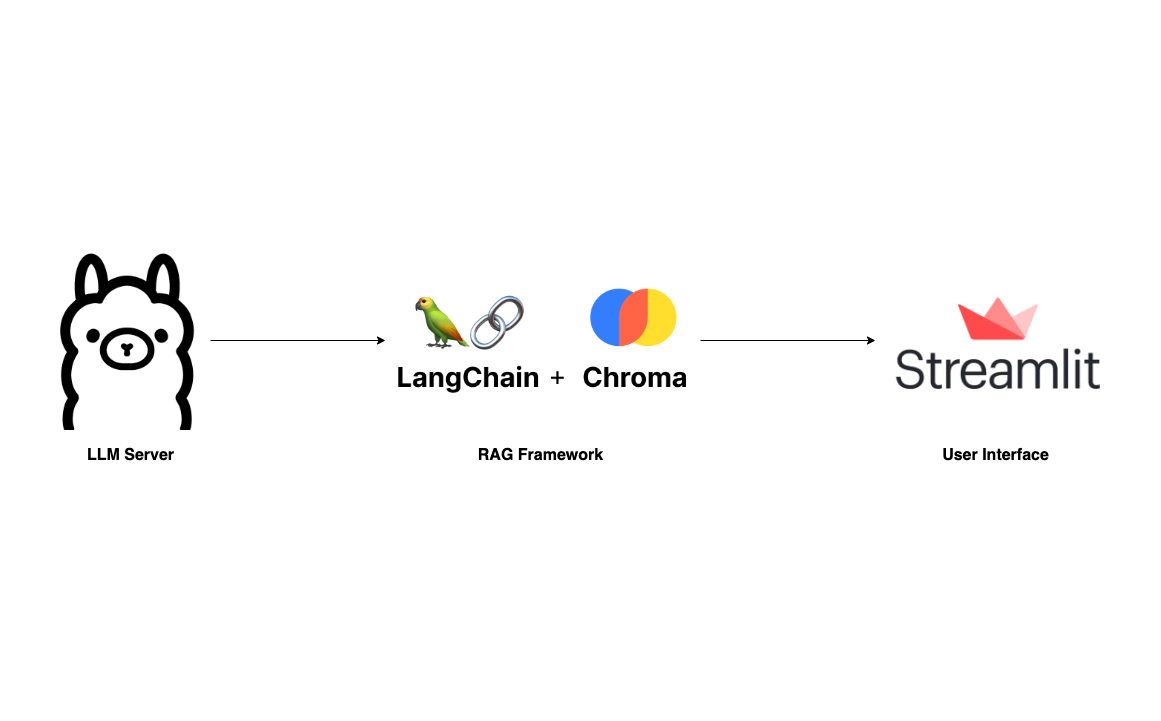

Build your own RAG and run it locally: Langchain + Ollama + Streamlit

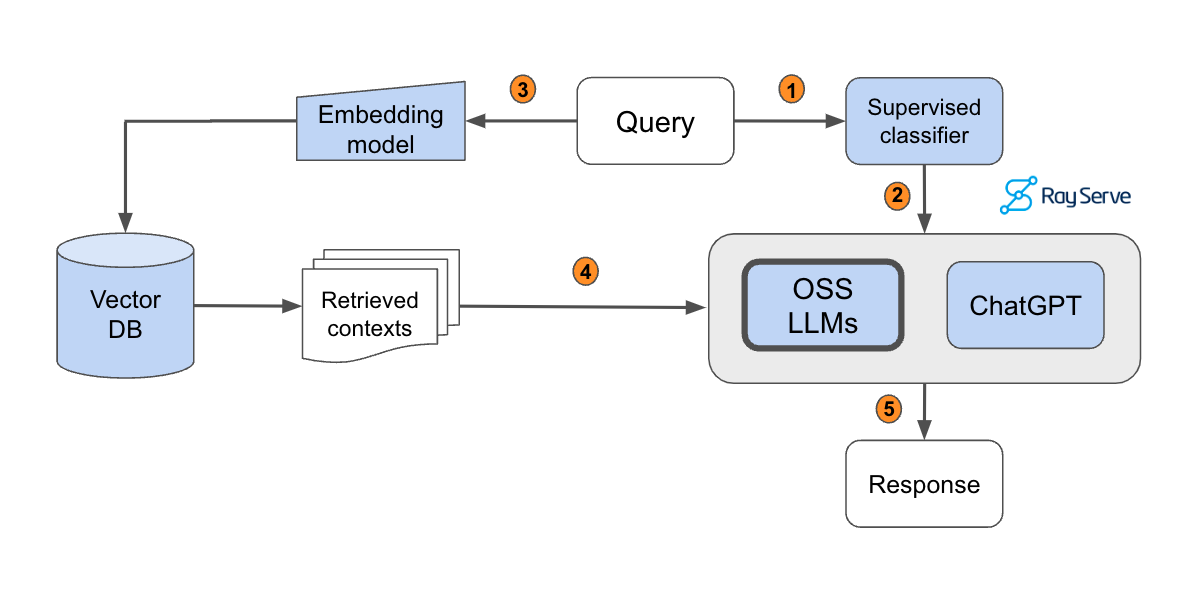

With the rise of Large Language Models and its impressive capabilities, many fancy applications are being built on top of giant LLM providers like OpenAI and Anthropic. The myth behind such applications is the RAG framework, which has been thoroughly explained in the following articles:

To become familiar with RAG, I recommend going through these articles. This post, however, will skip the basics and guide you directly on building your own RAG application that can run locally on your laptop without any worries about data privacy and token cost.

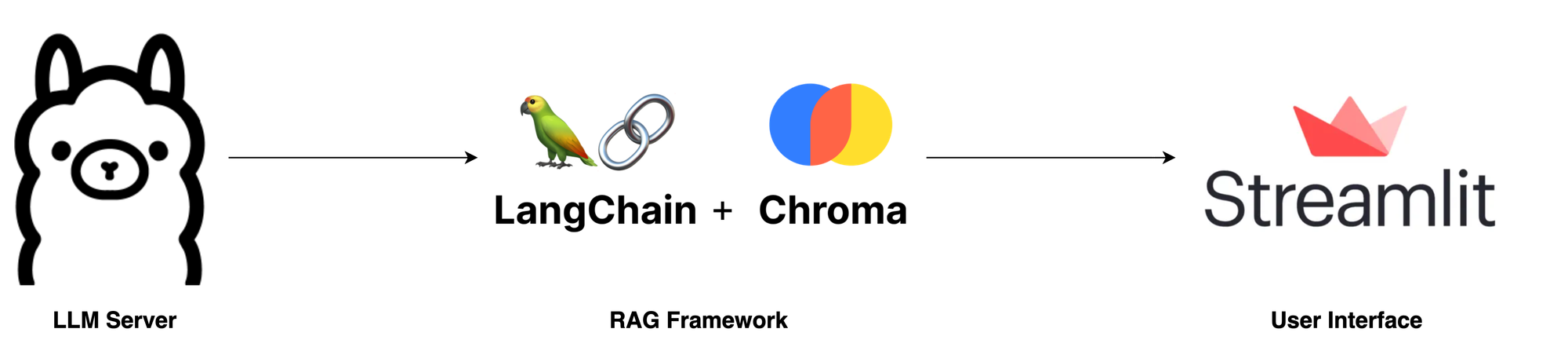

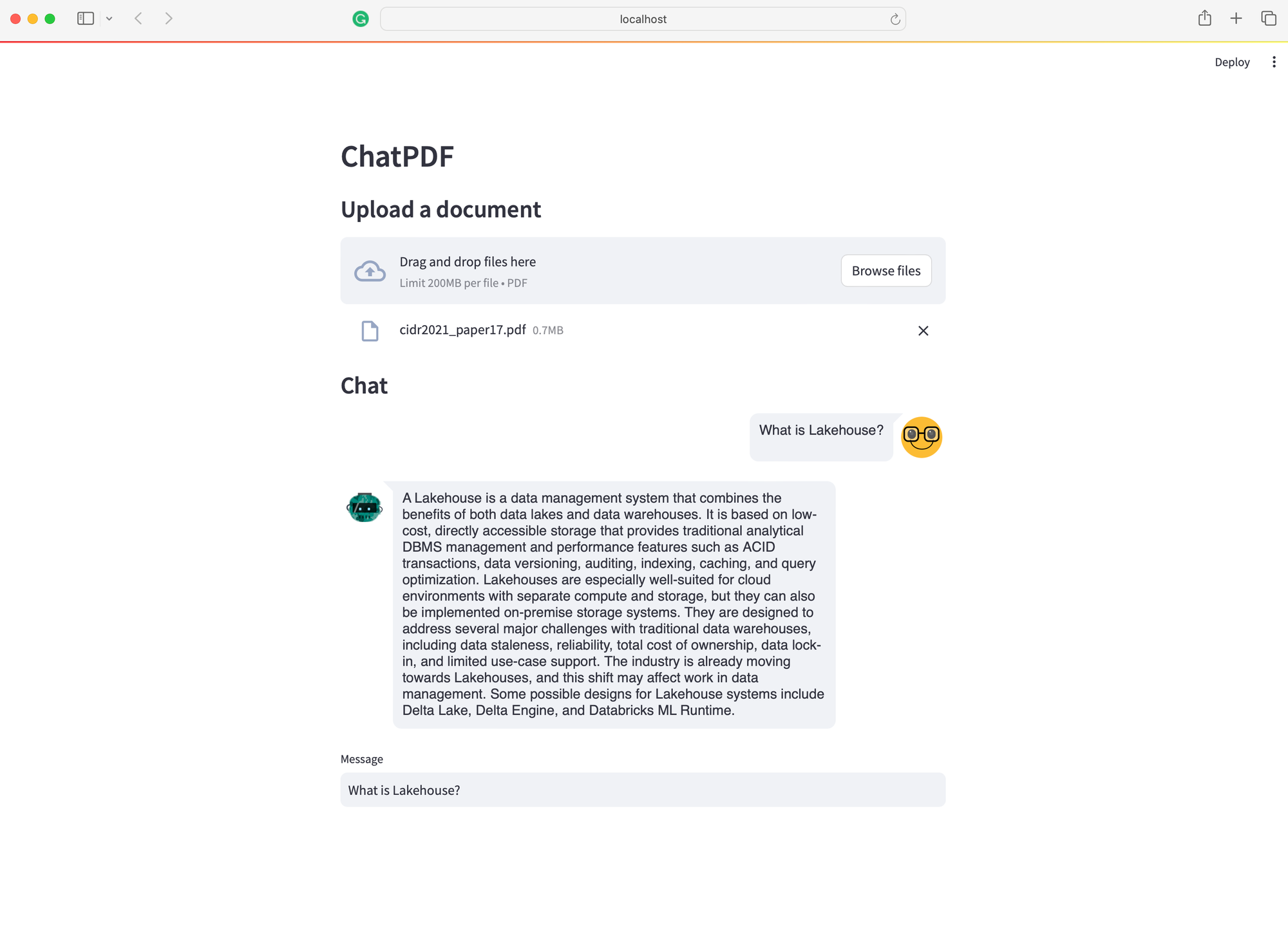

We will build an application that something similar to ChatPDF but simpler. Where users can upload a PDF document and ask questions through a straightforward UI. Our tech stack is super easy with Langchain, Ollama, and Streamlit.

- LLM Server: The most critical component of this app is the LLM server. Thanks to Ollama, we have a robust LLM Server that can be set up locally, even on a laptop. While llama.cpp is an option, I find Ollama, written in Go, easier to set up and run.

- RAG: Undoubtedly, the two leading libraries in the LLM domain are Langchain and LLamIndex. For this project, I'll be using Langchain due to my familiarity with it from my professional experience. An essential component for any RAG framework is vector storage. We'll be using Chroma here, as it integrates well with Langchain.

- Chat UI: The user interface is also an important component. Although there are many technologies available, I prefer using Streamlit, a Python library, for peace of mind.

Okay, let's start setting it up

Setup Ollama

As mentioned above, setting up and running Ollama is straightforward. First, visit ollama.ai and download the app appropriate for your operating system.

Next, open your terminal and execute the following command to pull the latest Mistral-7B. While there are many other LLM models available, I choose Mistral-7B for its compact size and competitive quality.

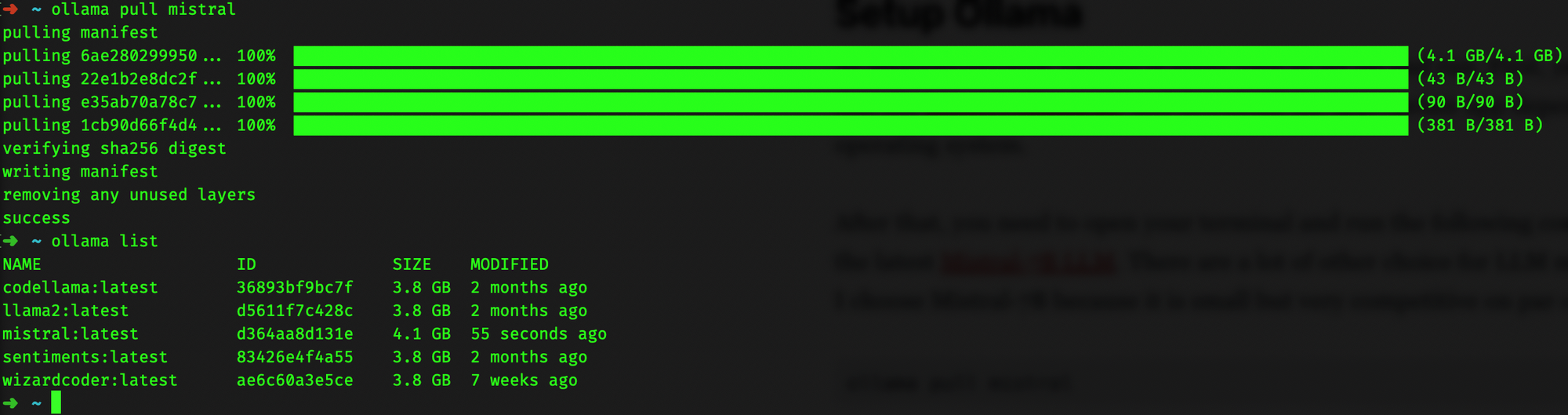

ollama pull mistralAfterward, run ollama list to verify if the model was pulled correctly. The terminal output should resemble the following:

Now, if the LLM server is not already running, initiate it with ollama serve. If you encounter an error message like "Error: listen tcp 127.0.0.1:11434: bind: address already in use" it indicates the server is already running by default, and you can proceed to the next step.

Build RAG pipeline

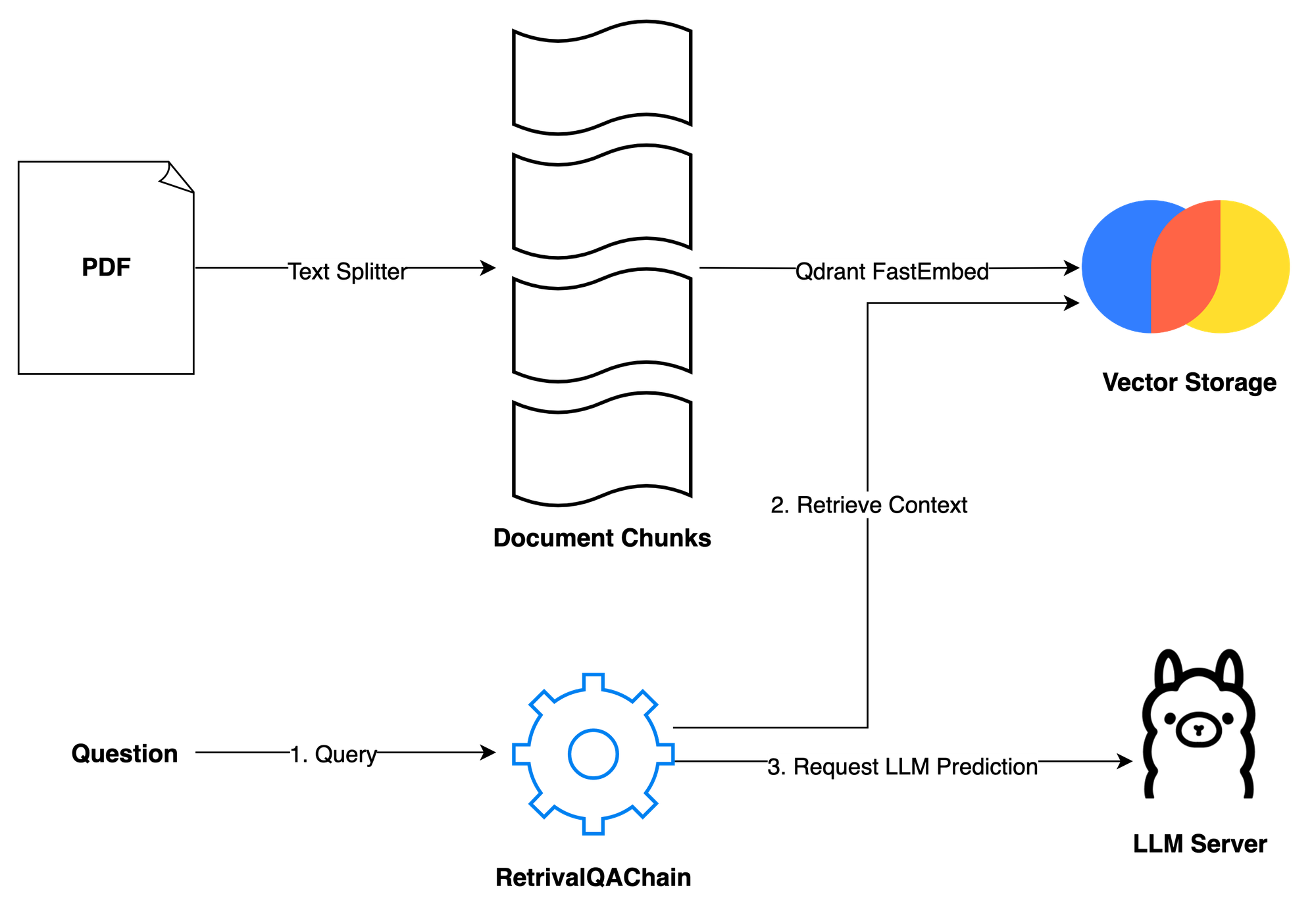

The second step in our process is to build the RAG pipeline. Given the simplicity of our application, we primarily need two methods: ingest and ask. The ingest method accepts a file path and loads it into vector storage in two steps: first, it splits the document into smaller chunks to accommodate the token limit of the LLM; second, it vectorizes these chunks using Qdrant FastEmbeddings and store into Chroma. The ask method handles user queries. Users can pose a question, and then the RetrievalQAChain retrieves the relevant contexts (document chunks) using vector similarity search techniques. With the user's question and the retrieved contexts, we can compose a prompt and request a prediction from the LLM server.

The prompt is sourced from the Langchain hub: Langchain RAG Prompt for Mistral. This prompt has been tested and downloaded thousands of times, serving as a reliable resource for learning about LLM prompting techniques. You can learn more about LLM prompting techniques here.

More details on the implementation:

ingest: We use PyPDFLoader to load the PDF file uploaded by the user. The RecursiveCharacterSplitter, provided by Langchain, then splits this PDF into smaller chunks. It's important to filter out complex metadata not supported by ChromaDB using thefilter_complex_metadatafunction from Langchain. For vector storage, Chroma is used, coupled with Qdrant FastEmbed as our embedding model. This lightweight model is then transformed into a retriever with a score threshold of 0.5 and k=3, meaning it returns the top 3 chunks with the highest scores above 0.5. Finally, we construct a simple conversation chain using LECL.ask: This method simply passes the user's question into our predefined chain and then returns the result.clear: This method is used to clear the previous chat session and storage when a new PDF file is uploaded.

Draft simple UI

For a simple user interface, we will use Streamlit, a UI framework designed for fast prototyping of AI/ML applications.

Run this code with the command streamlit run app.py to see what it looks like.

Okay, that's it! We now have a ChatPDF application that runs entirely on your laptop. Since this post mainly focuses on providing a high-level overview of how to build your own RAG application, there are several aspects that need fine-tuning. You may consider the following suggestions to enhance your app and further develop your skills:

- Add Memory to the Conversation Chain: Currently, it doesn't remember the conversation flow. Adding temporary memory will help your assistant be aware of the context.

- Allow multiple file uploads: it's okay to chat about one document at a time. But imagine if we could chat about multiple documents – you could put your whole bookshelf in there. That would be super cool!

- Use Other LLM Models: While Mistral is effective, there are many other alternatives available. You might find a model that better fits your needs, like LlamaCode for developers. However, remember that the choice of model depends on your hardware, especially the amount of RAM you have 💵

- Enhance the RAG Pipeline: There's room for experimentation within RAG. You might want to change the retrieval metric, the embedding model,.. or add layers like a re-ranker to improve results.

Finally, thank you for reading. If you find this information useful, please consider subscribing to my Substack or my personal blog. I plan to write more about RAG and LLM applications, and you're welcome to suggest topics by leaving a comment below. Cheers!

Full source code: https://github.com/vndee/local-rag-example